UCLA Engineers Create Algorithm for Powerful Imaging in Low-Light Conditions

Advance can lead to improved vision-assistance and cell-tracking technologies

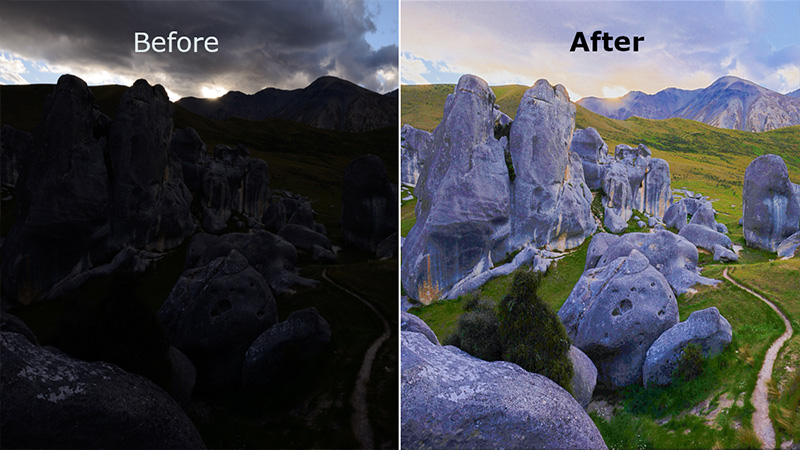

Callen MacPhee and Bahram Jalali/UCLA

Before-and-after images using the Vision Enhancement via Virtual-diffraction and coherent Detection algorithm

A team of engineers at the UCLA Samueli School of Engineering has developed a powerful computational imaging algorithm that can automatically correct pictures taken in poor lighting to produce high-resolution images in real time. The algorithm enhances the visibility and color of both still images and videos at record speed.

Led by Bahram Jalali, a distinguished professor emeritus of electrical and computer engineering as well as bioengineering, the team has established a new paradigm for algorithm design in which the laws of physics provide a blueprint for crafting algorithms.

This week, the team published its aptly named approach, “VEViD,” which stands for Vision Enhancement via Virtual-diffraction and coherent Detection, in the optics journal eLight. Callen MacPhee, a UCLA electrical engineering graduate student pursuing a master’s degree, is the paper’s co-author.

The new methodology stands in sharp contrast both to classical algorithms based on hand-crafted empirical rules and to modern artificial intelligence algorithms that rely on massive amounts of computation involving millions of parameters.

“Our new algorithm builds on more than two decades of research in optical physics in our laboratory and has direct applications in augmented reality, night-time driving, biology, and space exploration,” Jalali said. “Comparison of VEViD with leading neural network algorithms shows comparable or better image quality but with one to two orders of magnitude faster processing speed.”

The physics-inspired approach leads to a surprisingly simple and computationally efficient algorithm with exceptional image quality and processing speed. The algorithm can enhance 4K-resolution videos at more than 200 frames per second, much faster than other algorithms with similar image quality.
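To make “virtual diffraction and coherent detection” more concrete, here is a minimal sketch of a simplified variant. It is not the team’s released code, and it rests on stated assumptions. The image brightness, assumed here to be a single channel with values in [0, 1], is treated as a light field and offset by a small constant `b`. That field is passed through a phase kernel, and the resulting phase angle is read out as the new brightness. The sketch also assumes the phase kernel is constant across all spatial frequencies. This removes the Fourier-transform step and reduces the whole transform to a per-pixel formula. The function name `vevid_lite` and the values of `b` and `g` are illustrative assumptions.

```python
import math


def vevid_lite(v_channel, b=0.16, g=1.4):
    """Brighten a low-light brightness channel with a constant-phase, VEViD-style transform.

    Hypothetical sketch: v_channel is a 2-D list of brightness values in [0, 1]
    (assumed, e.g. a V channel); b and g are illustrative, not published settings.
    """
    phases = []
    for row in v_channel:
        out_row = []
        for v in row:
            # "Virtual diffraction" with an assumed constant phase kernel S: the field
            # (v + b) is rotated by -S, so its imaginary part is -(v + b) * sin(S).
            # Here the gain g is assumed to absorb the sin(S) factor.
            imag = -g * (v + b)
            # "Coherent detection": take the phase angle, using the original
            # brightness v as the reference (real) component.
            out_row.append(math.atan2(imag, v))
        phases.append(out_row)

    # Min-max normalize the detected phase back to the [0, 1] range.
    lo = min(min(r) for r in phases)
    hi = max(max(r) for r in phases)
    if hi == lo:
        # A perfectly uniform image carries no contrast to enhance; return it unchanged.
        return [list(r) for r in v_channel]
    return [[(p - lo) / (hi - lo) for p in r] for r in phases]


dark = [[0.0, 0.05], [0.2, 1.0]]
print(vevid_lite(dark))  # dark pixels end up well above their input values
```

In this simplified form, each output pixel stays monotonic in its input, so the ordering of brightness is preserved while shadows are lifted. Because the transform has no learned weights and touches each pixel once, its cost grows linearly with pixel count. That is consistent with the speed advantage the article describes, although the published method uses a frequency-dependent kernel rather than the constant one assumed here.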

Under low-light conditions, such as at nighttime, digital images suffer from low contrast and loss of information. VEViD is designed to restore contrast and detail for two purposes: better visual quality for human viewers and higher object-detection accuracy for machine-learning models. The technology has applications in security cameras, autonomous vehicles, robots, vision assistance for the elderly, and live-cell tracking for drug development.

The team has demonstrated VEViD’s ability to enhance color and has paired the algorithm with a neural network trained to detect objects.

“VEViD can act as a pair of glasses for computer vision algorithms trained on daylight scenes — allowing them to see and detect objects in the dark,” MacPhee said. “This makes those networks more robust while also saving training time and energy.”

The research was supported by grants from the Parker Center for Cancer Immunotherapy and the Office of Naval Research. The UCLA team will make its code and data available in a publicly accessible GitHub repository.